Obfuscation and AI Content in the Russian Influence Network “Doppelgänger” Signals Evolving Tactics

New Insikt Group research examines an ongoing operation by the Russia-linked influence network called Doppelgänger. The operation targets audiences in Ukraine, the United States (US), and Germany through inauthentic news sites and social media accounts. Doppelgänger's tactics reveal a high level of sophistication, incorporating advanced obfuscation techniques and likely utilizing generative artificial intelligence (AI) to create deceptive news articles.

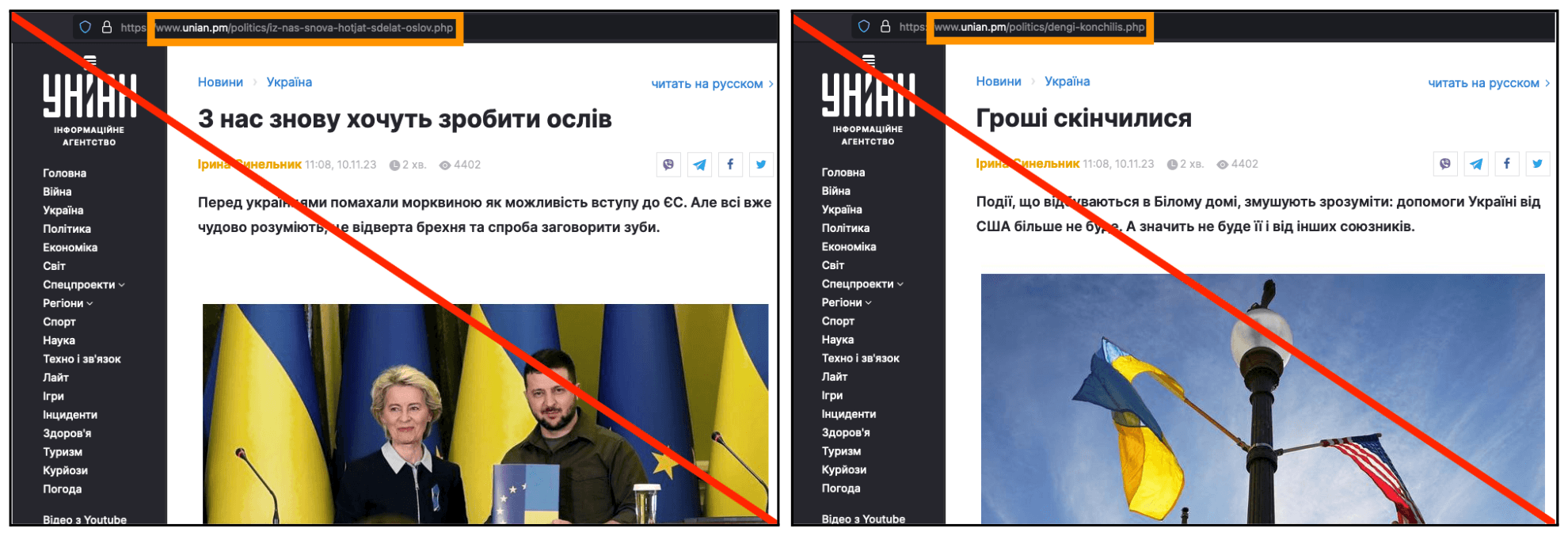

Doppelgänger articles dated Nov. 10, 2023, impersonating UNIAN. (Left) Translated: “They Again Want to Make Donkeys out of Us”; (Right) Translated: “The money ran out”. The inauthentic UNIAN domain is highlighted in orange. (Source: Inauthentic UNIAN (archived, 2))

The first campaign identified by Insikt Group targeted Ukrainian audiences, employing hundreds of social media accounts engaged in Coordinated Inauthentic Behavior (CIB). These accounts shared links to inauthentic articles impersonating reputable Ukrainian news organizations, spreading narratives undermining Ukraine’s military strength and political stability.

In subsequent campaigns targeting US and German audiences, Doppelgänger created six original but inauthentic news outlets producing malign content. The US-focused campaign aimed to exploit societal and political divisions ahead of the 2024 US election, fueling anti-LGBTQ+ sentiment, criticizing US military competence, and amplifying political divisions around US support for Ukraine. The German-focused campaign highlighted Germany’s economic and social issues, intending to weaken confidence in German leadership and reinforce nationalist sentiment.

Doppelgänger's adaptability exemplifies the enduring nature of Russian information warfare, with a strategic focus on gradually shifting public opinion and behavior. The use of generative AI for content creation signifies an evolution in tactics, reflecting the broader trend of leveraging AI in information warfare campaigns. As the popularity of generative AI grows, malign influence actors like Doppelgänger are very likely to increasingly employ AI for scalable influence content.

The findings emphasize the importance of continued collaboration and public reporting across sectors to raise awareness and enhance online literacy in countering malign influence. Media organizations, in particular, are encouraged to proactively monitor for brand abuse during such operations and issue takedown requests where appropriate. Despite the exposure of Doppelgänger's activities, its ongoing evolution and use of AI suggest potential long-term societal impacts, including the erosion of public trust and increased polarization.
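As one illustration of what monitoring for brand abuse could involve, the sketch below flags newly observed domains whose name resembles a protected brand. It is a minimal example under stated assumptions, not a method described in the report: the brand name `examplenews`, the `.example` domains, the function names, and the 0.75 similarity threshold are all hypothetical, and the parsing only looks at the left-most label of each domain.

```python
from difflib import SequenceMatcher


def leftmost_label(domain: str) -> str:
    """Return the left-most label of a domain, lowercased.

    Naive on purpose: it does not handle subdomains or public suffixes.
    """
    return domain.lower().strip(".").split(".")[0]


def is_suspicious(domain, brands, legit_domains, threshold=0.75):
    """Flag a domain that is not on the allow-list but resembles a brand.

    A domain is flagged when a brand name appears inside its left-most
    label, or when the label is a close fuzzy match to the brand name.
    The threshold is a hypothetical starting point, not a tuned value.
    """
    normalized = domain.lower().strip(".")
    if normalized in legit_domains:
        return False
    label = leftmost_label(normalized)
    for brand in brands:
        if brand in label:
            return True
        if SequenceMatcher(None, brand, label).ratio() >= threshold:
            return True
    return False


# Hypothetical inputs: one protected brand and its single legitimate domain.
BRANDS = ["examplenews"]
LEGIT = {"examplenews.example"}

if __name__ == "__main__":
    observed = [
        "examplenews.example",        # the legitimate site
        "examplenews-today.example",  # brand embedded in a longer label
        "examp1enews.example",        # character substitution
        "weather.example",            # unrelated
    ]
    for d in observed:
        print(d, is_suspicious(d, BRANDS, LEGIT))
```

In practice the list of observed domains would have to come from a source such as newly registered domain feeds or links shared on social media, and any flagged domain would still need human review before a takedown request is filed.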

The entire analysis is available to download as a PDF report.
