Machine Learning: Practical Applications for Cybersecurity

Key Takeaways

- Despite what you’ve seen in the movies, machines are not about to replace the need for human intelligence.

- The future of cybersecurity isn’t about man OR machine — it’s about man AND machine. In chess, a team of amateurs operating even standard desktop PCs dramatically outperforms both the strongest human players and the most powerful supercomputers in isolation.

- The secret to actionable threat intelligence lies in playing to the individual strengths of machines and human analysts. Machines perform the heavy lifting (data aggregation, pattern recognition, etc.) and provide a manageable number of actionable insights. From there, human analysts make decisions on how to act.

- One of the biggest barriers to human intelligence is language. With modern natural language processing, machines can process text irrespective of language, including slang and industry jargon.

- The battle in threat intelligence is balancing time and context. Analysts need intelligence promptly, but they also need enough information to make a decision on how to act. This is only possible using modern AI and machine-learning processes.

If you’ve walked around any security conferences recently, you’ll have heard dozens of vendors talking about artificial intelligence (AI) and machine learning.

But what do they actually do?

Are they really going to usher in a grand age of cybersecurity? And are security analysts about to be collectively out of a job?

Recently, Recorded Future co-hosted a webinar with SANS Institute with the goal of helping security-conscious organizations understand how machine learning can help them process an almost infinite number of inputs into a small number of actionable outputs.

The speakers were Chris Pace, technology advocate at Recorded Future, and Ismael Valenzuela, principal engineer at McAfee.

AI vs. Machine Learning

Before jumping into the details, Valenzuela and Pace laid out the difference between AI and machine learning. Put simply, AI is a field of computing, of which machine learning is one part.

Specifically, AI encompasses any case where a machine is designed to complete tasks which, if done by a human, would require intelligence. Within AI there are a variety of technologies, including:

- Machine learning: Machines which “learn” while processing large quantities of data, enabling them to make predictions and identify anomalies.

- Knowledge representations: Systems of data representation that enable machines to solve complex problems (e.g., ontologies).

- Rule-based systems: Machines that process inputs based on a set of predetermined rules.

As the volume and complexity of threats have increased rapidly in recent years, security vendors have begun to leverage one or more elements of AI in an attempt to reduce the burden on human analysts.

Enter the Centaur

One of the most persistent myths about AI is that it will make human analysts redundant in the near future. The reality, however, is quite different. Valenzuela explains:

“No matter what we see in the movies, the reality is the intelligence we have in machines today is not comparable to human intelligence. That’s not going to change in the next 20 years. But that’s not to say there aren’t a whole lot of benefits we can extract from AI and machine learning in the field of cybersecurity.”

Rather than relying on one or the other, Valenzuela says a combination of human and artificial intelligence dramatically outperforms either in isolation. To illustrate his point, he uses the example of chess.

Back in 1997, the reigning world champion and chess grandmaster Garry Kasparov lost a highly publicized match against IBM supercomputer Deep Blue. Many people thought this would be the “end of chess,” as computers would only become more powerful over time.

Nonetheless, 20 years later, the game is alive and well. Why? Because although computers are able to process massive numbers of moves in a short space of time, they don’t have the ability to think critically or creatively, both of which are essential to winning consistently at the highest levels of chess. It should be noted that while Deep Blue had access to Kasparov’s entire playing history in the run-up to the match, Kasparov himself was denied access to any of the machine’s games.

But fast forward to 2005, and something much more interesting occurred in the chess world. In a freestyle competition, where competitors ranged from teams of grandmasters to a supercomputer, an unlikely winner arose: A team of two amateurs operating three standard desktop PCs.

Despite facing far more gifted competitors, not to mention vastly more powerful computers, the team won for one reason: They had a better process for interpreting and utilizing the information provided by their computers.

“They came to know how to use the combination of human and machine thinking to best effect,” explains Valenzuela. “A weak human player plus a machine plus a good process is superior to a very powerful machine alone, and it’s also superior to a much stronger human player using a supercomputer with a weaker process.”

In the chess world, these “human plus computer” teams are known as “centaurs,” and they dramatically outperform the top humans and computers in isolation.

Computers, after all, are extremely good at specific things, such as automating simple tasks and solving complex equations, but they have no passion, creativity, or intuition. Skilled humans, meanwhile, can display all of these traits, but can be outperformed by even the most basic of computers when it comes to raw calculating power.

The AI revolution, then, has never been about replacing humans with machines, but about machines providing humans with the context and information they need to make informed decisions.

Playing to Your Strengths

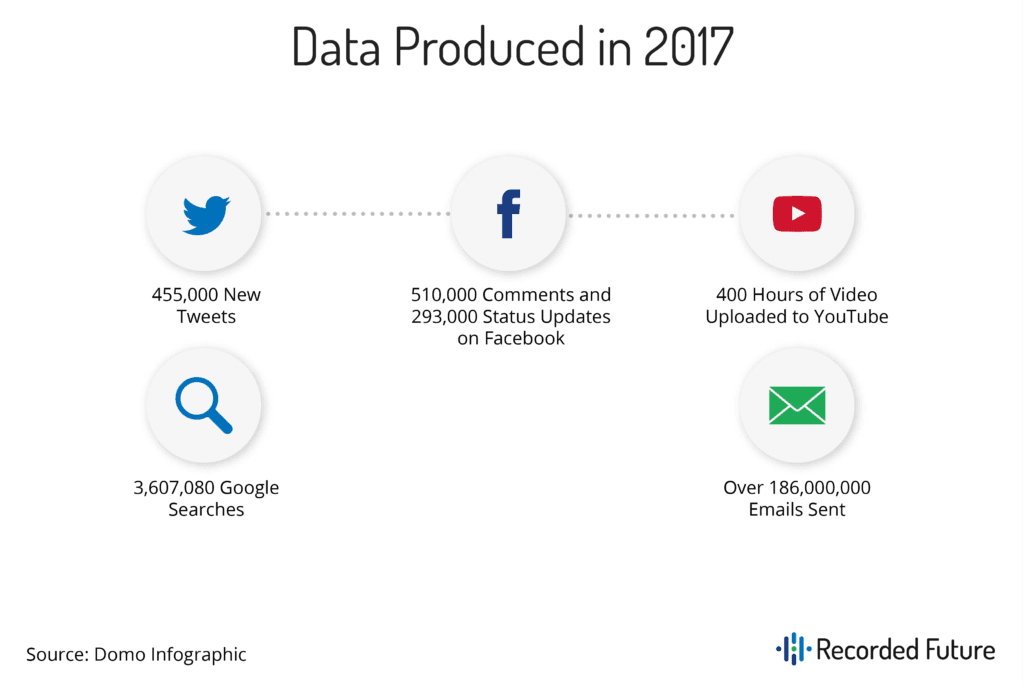

Data has posed perhaps the single greatest challenge in cybersecurity over the past decade. For a human, or even a large team of humans, the amount of data produced daily on a global scale is unimaginable, as the per-minute figures for 2017 in the image above illustrate.

Combine this with the massive number of alerts most organizations see from their SIEM, firewall logs, and user activity, and it’s clear human security analysts are simply unable to operate in isolation. Thankfully, this is where machines excel, automating simple tasks such as processing and classification to ensure analysts are left with a manageable quantity of actionable insights.

For instance, AI is routinely used in cybersecurity for the following purposes:

- Pattern recognition: Identifying phishing emails based on content or sender info, identifying malware, etc.

- Anomaly detection: Spotting unusual activity, data, or processes (e.g., fraud detection for online banking or gambling).

- Natural language processing (NLP): Converting unstructured text such as a webpage into structured intelligence.

- Predictive analytics: Processing data and identifying patterns in order to make predictions and identify outliers.
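To make the anomaly detection item more concrete, here is a minimal sketch that flags an observation sitting far outside a learned baseline. It uses a simple z-score, which is only one of many possible techniques and is not attributed to any vendor mentioned here. The function name, the threshold, and the failed-login counts are all assumptions made up for illustration.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observation, threshold=3.0):
    """Return True if `observation` lies more than `threshold` standard
    deviations away from the mean of the `baseline` values."""
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any deviation at all is unusual.
        return observation != mu
    return abs(observation - mu) / sigma > threshold


# Hypothetical hourly counts of failed logins for one account.
baseline_counts = [4, 6, 5, 3, 7, 5, 4, 6, 5, 4, 5]

print(is_anomalous(baseline_counts, 6))    # an ordinary hour
print(is_anomalous(baseline_counts, 240))  # a sudden burst worth a look
```

The machine only narrows attention to the unusual hour. Deciding whether the burst is an attack or a misconfigured script remains the analyst's call.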

“The key battle in security is balancing time and context,” says Pace. “It’s essential that we respond quickly to security incidents, but we also need to understand enough about an incident to respond intelligently. Machines play a huge role here because they are able to process a massive amount of incoming data in a tiny fraction of the time it would take even a large group of skilled humans. They can’t make the decision of how to act, but they can provide an analyst with everything they need to do so.”

Artificial Threat Intelligence

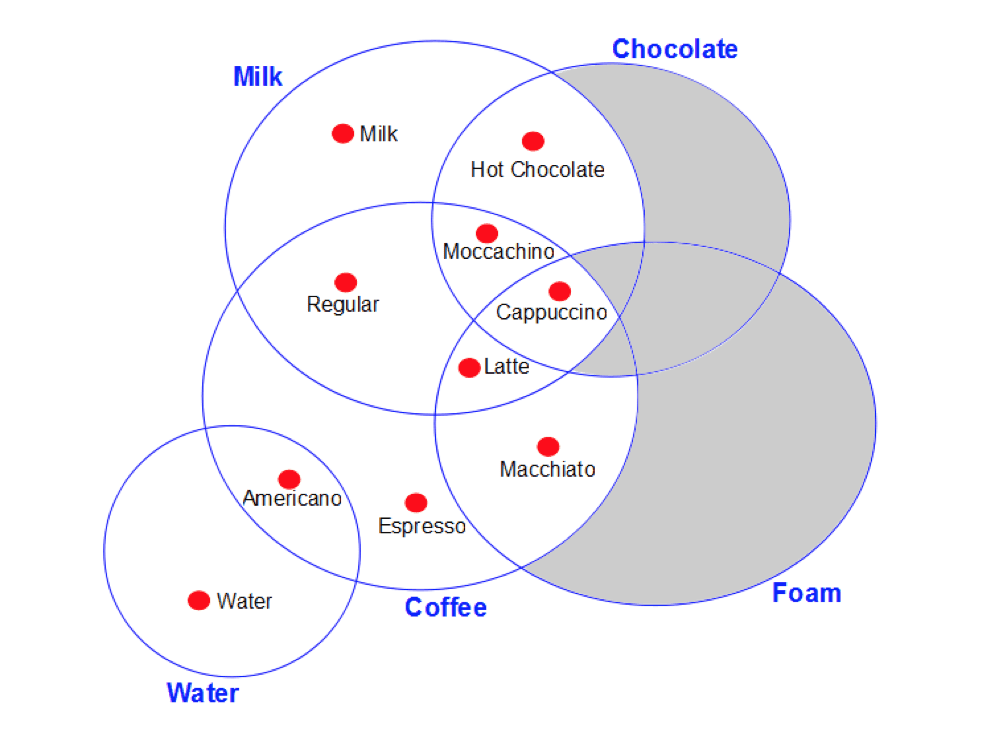

To understand how AI and machine learning can benefit cybersecurity, it helps to have an understanding of how machines make sense of large volumes of data. Typically, they do this using knowledge representations such as ontologies.

Simply put, ontologies are systems made up of distinct “things” known as entities, and their relationships to each other. For instance, the diagram above shows a very simple ontology of different types of coffee.

In this case, a simple Venn diagram, the individual ingredients are entities, but they form an ontology which includes a set of relationships. From this ontology, we can determine that a combination of milk, coffee, and foam becomes a latte, for example.
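The coffee ontology can be sketched in a few lines of code. In this illustrative version, ingredients are the entities and each drink is defined by the set of ingredients it relates to. Only the latte recipe comes from the description above; the other drinks and all function names are assumptions added to give the example something to distinguish.

```python
# Relationships: each drink is defined by the set of ingredient entities it combines.
ONTOLOGY = {
    "latte": frozenset({"coffee", "milk", "foam"}),  # from the text above
    "americano": frozenset({"coffee", "water"}),     # assumed for illustration
    "black coffee": frozenset({"coffee"}),           # assumed for illustration
}


def classify(ingredients):
    """Return the drink whose recipe exactly matches the ingredients, or None."""
    wanted = frozenset(ingredients)
    for drink, recipe in ONTOLOGY.items():
        if recipe == wanted:
            return drink
    return None


def drinks_containing(ingredient):
    """Follow the relationship in the other direction: ingredient -> drinks."""
    return sorted(drink for drink, recipe in ONTOLOGY.items() if ingredient in recipe)


print(classify(["milk", "coffee", "foam"]))
print(drinks_containing("coffee"))
```

Because the relationships are explicit, the same structure answers questions in both directions: what a given combination is, and where a given entity appears.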

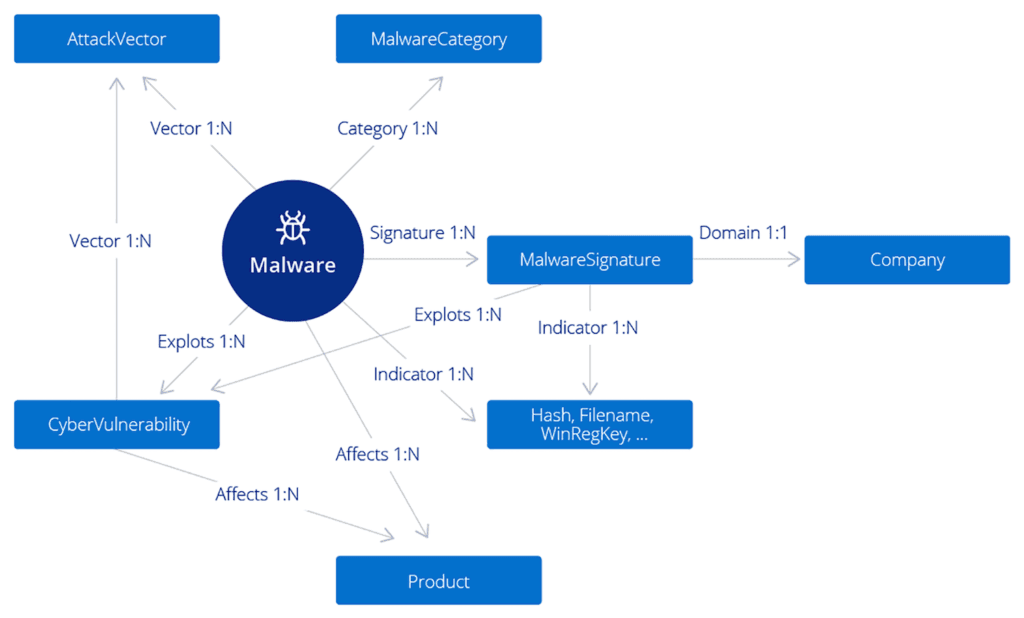

In the realm of cybersecurity, ontologies are used to represent the real world inside a machine-learning environment. Pace explains:

“In this ontology you can see malware sitting at the center, surrounded by various other entities that could relate to that malware. For example, the entity 'MalwareCategory' could be a banking trojan or a worm, and the 'AttackVector' entity might indicate either spam, or SQL injection, or a particular vulnerability the malware is exploiting.”

Using this type of ontology, a machine can begin to “understand” the real world — in this case, the threats faced by a network.

“Of course, in order for a machine to build up a comprehensive picture, it needs to consume data, and classify any data points it recognizes that refer to these particular entities,” Pace says. “From there, the next step is to convert those entities into events, which in turn will have their own classifications, for example, the Attacker, or the Target, or the Method.”
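The step from classified entities to events that Pace describes might look something like the sketch below. The entity types 'MalwareCategory' and 'AttackVector' and the roles Attacker, Target, and Method come from the quotes above. Everything else is an assumption for illustration: the class names, the 'ThreatActor' and 'Organization' types, the type-to-role mapping, and the sample data. It does not describe Recorded Future's actual data model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Entity:
    name: str
    entity_type: str  # e.g. "MalwareCategory", "AttackVector", "Organization"


@dataclass(frozen=True)
class CyberAttackEvent:
    attacker: Optional[Entity]
    target: Optional[Entity]
    method: Optional[Entity]


# Assumed mapping from entity type to the role it plays in an event.
ROLE_BY_TYPE = {
    "ThreatActor": "attacker",
    "Organization": "target",
    "AttackVector": "method",
}


def to_event(entities):
    """Build an event from the first entity found for each role."""
    roles = {}
    for entity in entities:
        role = ROLE_BY_TYPE.get(entity.entity_type)
        if role and role not in roles:
            roles[role] = entity
    return CyberAttackEvent(
        attacker=roles.get("attacker"),
        target=roles.get("target"),
        method=roles.get("method"),
    )


# Hypothetical entities recognized in a single data point.
recognized = [
    Entity("ExampleGroup", "ThreatActor"),
    Entity("Example Bank", "Organization"),
    Entity("SQL injection", "AttackVector"),
    Entity("banking trojan", "MalwareCategory"),
]
event = to_event(recognized)
print(event.attacker.name, "->", event.target.name, "via", event.method.name)
```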

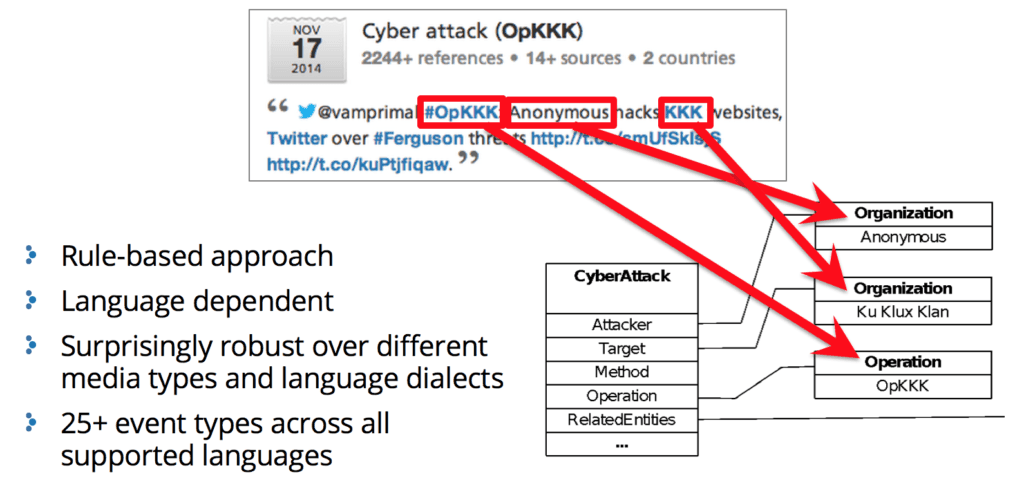

In the image above, you can see an example of how this might work in practice. The data point, in this case a post on Twitter, is being analyzed using a simple rule-based approach.

“This rule-based system is looking to identify entities that would indicate a cyberattack,” Pace explains. “It's able to identify an operation, it's able to identify two organizations, and it may also be able to identify a target, for example.”

This type of system offers major benefits to cybersecurity because it can process a huge number of data points very quickly, irrespective of source language.
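A rule-based extractor of the kind Pace describes can be approximated with a handful of regular expressions. This is a toy sketch: the rules, the organization names, and the sample post are all hypothetical, and a production system would use far larger rule sets and lexicons.

```python
import re

# Hypothetical rules: one pattern per entity type we want to recognize.
RULES = {
    "Operation": re.compile(r"#?Op[A-Z]\w+"),
    "Organization": re.compile(r"\b(?:Example Bank|Acme Corp)\b"),
    "AttackMethod": re.compile(r"\b(?:DDoS|SQL injection|phishing)\b", re.IGNORECASE),
}


def extract_entities(text):
    """Apply every rule to the text and return the matches grouped by entity type."""
    found = {}
    for entity_type, pattern in RULES.items():
        matches = pattern.findall(text)
        if matches:
            found[entity_type] = matches
    return found


# A made-up social media post.
post = "#OpExample: Acme Corp and Example Bank hit by DDoS, sites down"
print(extract_entities(post))
```

Rules like these are fast and easy to audit, but they only find what they were written to find, which is one reason they are combined with machine learning and NLP elsewhere in the process.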

Breaking the Language Barrier

Unfortunately, there’s another major barrier to producing actionable threat intelligence: Most of the information required by security professionals is buried in unstructured text spread across millions of websites on both the open and dark web.

Natural language processing, also known as NLP, offers huge benefits for cybersecurity because it enables machines to gather and make sense of data irrespective of language, format, and punctuation. Powerful NLP engines are even able to understand common slang and jargon across all languages, something a team of analysts could never aspire to.

Here’s how it works.

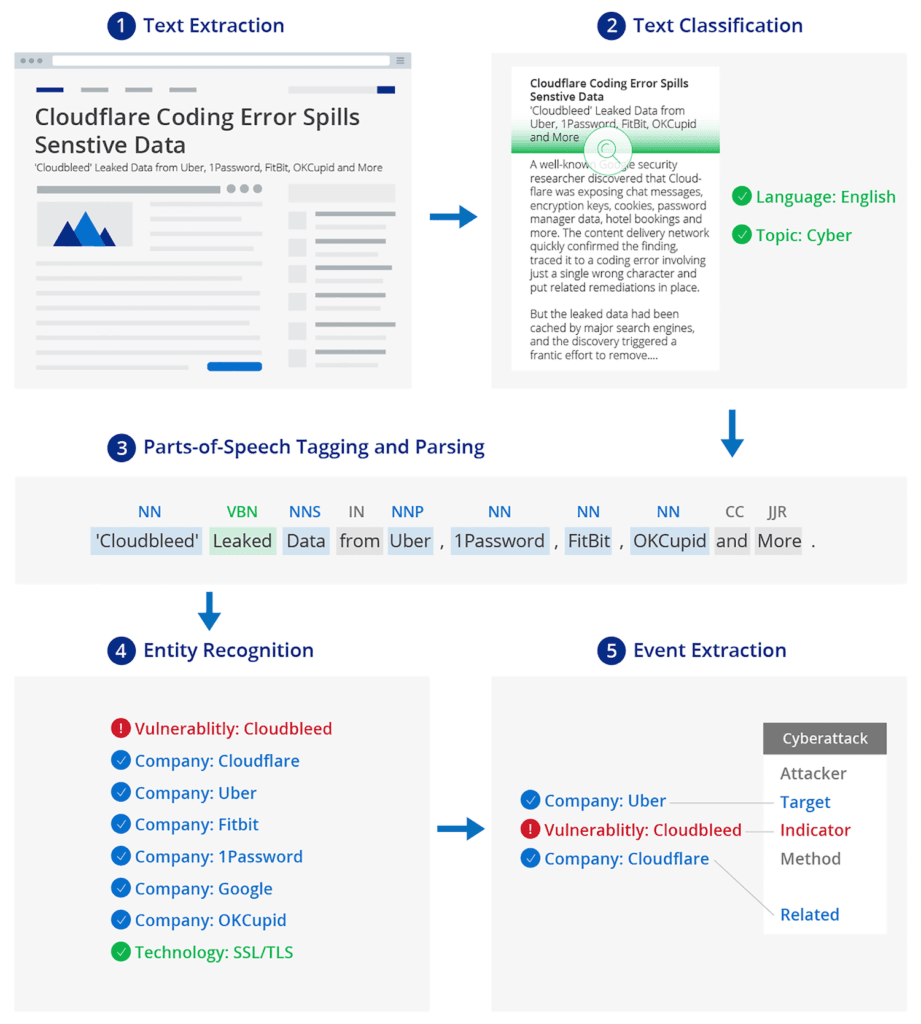

First, text is extracted from a data point, such as a web page or social media post. From there, a platform such as Recorded Future, which employs NLP, is able to classify the language being used and any cyber topics discussed (e.g., exploits or leaks).

Next, the text is further classified to remove any extraneous words or punctuation, to ensure only valuable inputs will be processed.

From here, Recorded Future is able to use the classified text to determine its content, including any entities mentioned. In this case, Recorded Future identified the Cloudbleed vulnerability, a number of high-profile companies, and a reference to SSL/TLS technology.

“To think about practical applications for a moment, if this new reference mentions your company and the terms ‘vulnerability’ or ‘exploit’ or a new cyber event, or in this case a cyberattack, you would know about that instantly,” Pace explains. “You’d be able to decide straight away whether there was anything you needed to do, particularly if the reference included technical indicators.”
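The sequence of steps above (classify the language, classify the topic, strip extraneous words, identify entities) can be illustrated with a deliberately tiny pipeline. This is a keyword-matching toy, not a description of how Recorded Future or any real NLP engine works; real engines rely on statistical models. The word lists, entity lookup table, and sample sentence are all assumptions.

```python
import re

# Tiny, assumed word lists; a real engine would learn these from data.
STOPWORDS = {
    "en": {"the", "a", "of", "and", "in", "is", "to", "by"},
    "es": {"el", "la", "de", "y", "en", "es", "por", "un"},
}
TOPIC_KEYWORDS = {
    "vulnerability": {"vulnerability", "bug", "leak"},
    "exploit": {"exploit", "exploited"},
}
KNOWN_ENTITIES = {"cloudbleed": "Vulnerability", "ssl": "Technology", "tls": "Technology"}


def tokenize(text):
    """Lowercase the text and drop punctuation."""
    return re.findall(r"[a-z0-9]+", text.lower())


def detect_language(tokens):
    """Pick the language whose stopwords appear most often (ties go to the first)."""
    scores = {lang: sum(t in words for t in tokens) for lang, words in STOPWORDS.items()}
    return max(scores, key=scores.get)


def analyze(text):
    tokens = tokenize(text)
    language = detect_language(tokens)
    # Remove extraneous words so only potentially valuable tokens remain.
    content = [t for t in tokens if t not in STOPWORDS[language]]
    topics = sorted(t for t, kws in TOPIC_KEYWORDS.items() if kws & set(content))
    entities = {t: KNOWN_ENTITIES[t] for t in content if t in KNOWN_ENTITIES}
    return {"language": language, "topics": topics, "entities": entities}


sample = "The Cloudbleed bug is a leak of data protected by SSL/TLS."
print(analyze(sample))
```

Even in this toy form, the output is structured: a language, a topic, and a set of typed entities that downstream rules or an analyst can act on.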

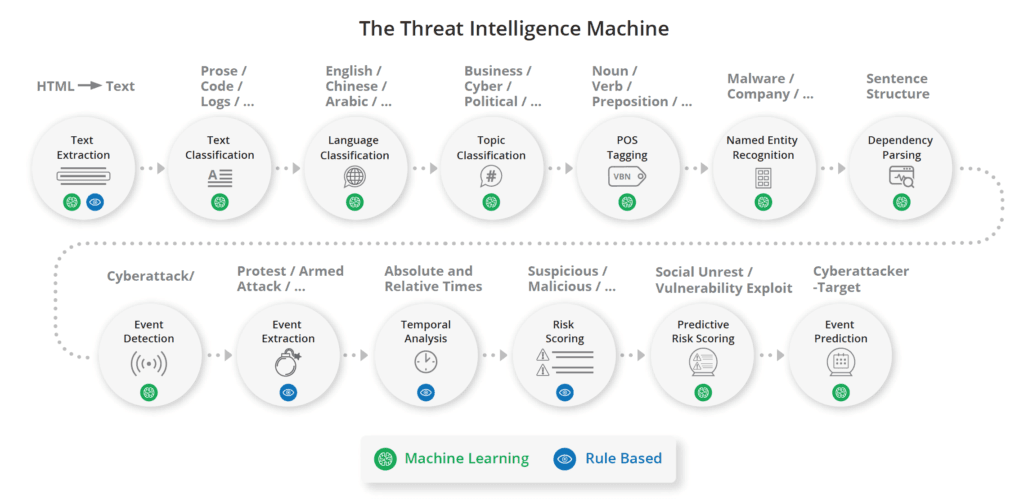

Of course, the process of turning individual data points into actionable intelligence requires a much longer sequence of steps. Recorded Future leverages machine learning, a rule-based approach, and NLP to harvest data, identify timescales, calculate risk to the organization, and even make predictions.

Rise of the Security Centaur

As we’ve already noted, the hardest part of the threat intelligence process for humans is the collection and processing of data. The type of automated process detailed above takes all that heavy lifting away, and paves the way for a “centaur” approach — human analysts making decisions based on insights produced by machines.

In order for this process to function seamlessly, machines must provide intelligence in a way that’s easy for a human analyst to understand and act on.

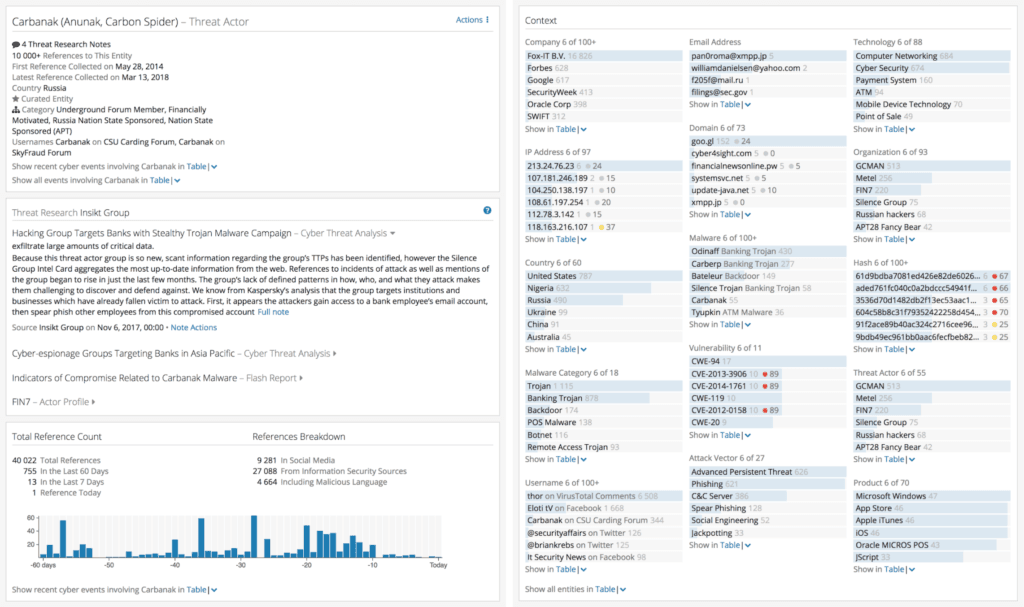

The image above, taken from Recorded Future, provides a breakdown of Carbanak, a threat actor group well-known for their banking trojans and attacks against financial services. From this view, an analyst can click through to easily find more information on a particular topic, such as types of malware commonly associated with the group.

Beyond this, Recorded Future is able to combine entities with context to deliver only relevant intelligence. The context section shown in the image above provides relevant details such as the associated domains, IP addresses, products affected, and more. A threat analyst would then have all of the necessary information to make more proactive decisions.

A retail organization being alerted to a high-profile threat group turning its attention to the retail industry, for example, would constitute an extremely valuable and actionable piece of intelligence.

Even so, this is an event rather than an alert. While it can be determined with some certainty that something needs to be done, it isn’t immediately obvious what that might be.

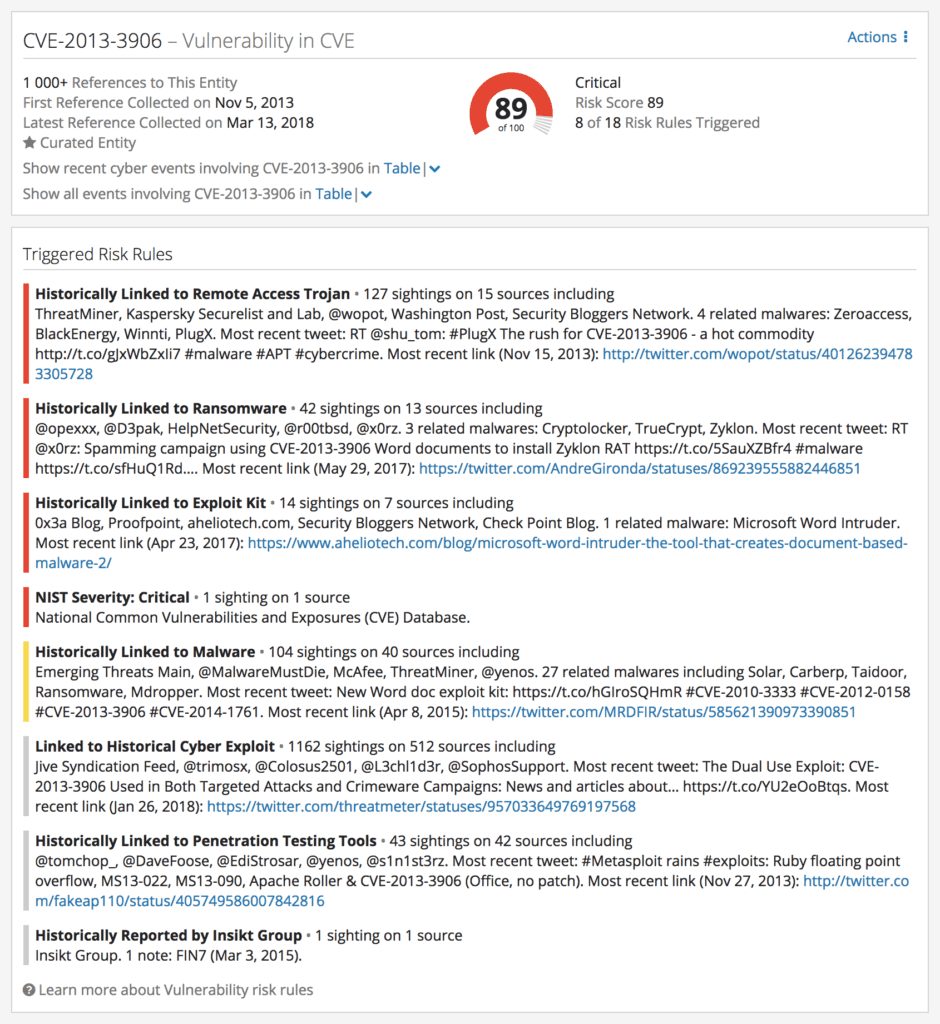

The image above, taken from Recorded Future, encapsulates an ideal output for a threat analyst. Instead of being pointed to a group, the alert flags a specific vulnerability which has seen a sharp increase in mentions, and which has been exploited by Carbanak in the past.

The vulnerability has been linked to exploit kits in the past, making it easy to abuse. When combined with Carbanak’s sudden interest in the retail industry, this all adds up to a substantial new threat to our hypothetical retail organization. At the same time, since a specific vulnerability has been flagged, it is clear what steps need to be taken.
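The reasoning behind such an alert can be sketched as a simple combination of signals: a spike in mentions, a link to an actor known to be targeting your industry, and availability in exploit kits. The sketch below is an assumption-laden illustration and not Recorded Future's scoring logic. The field names, the spike ratio, the priority labels, and the placeholder CVE identifier are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VulnerabilityIntel:
    cve_id: str
    mentions_last_week: int
    mentions_this_week: int
    exploited_by: set       # names of actors seen exploiting it in the past
    in_exploit_kits: bool   # whether it has been linked to exploit kits


def triage(intel, actors_targeting_us, spike_ratio=3.0):
    """Return "high", "medium", or None (no alert) for one vulnerability."""
    baseline = max(intel.mentions_last_week, 1)  # avoid dividing by zero
    spiking = intel.mentions_this_week / baseline >= spike_ratio
    relevant_actor = bool(intel.exploited_by & actors_targeting_us)
    if not (spiking and relevant_actor):
        return None
    # Exploit kit availability makes the vulnerability easier to abuse.
    return "high" if intel.in_exploit_kits else "medium"


# Hypothetical data for the retail scenario described above.
intel = VulnerabilityIntel(
    cve_id="CVE-0000-0000",  # placeholder, not a real identifier
    mentions_last_week=10,
    mentions_this_week=60,
    exploited_by={"Carbanak"},
    in_exploit_kits=True,
)
print(triage(intel, actors_targeting_us={"Carbanak"}))
```

An alert built this way names a specific vulnerability, so the follow-up action (check exposure, patch, or mitigate) is clear to the analyst who receives it.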

“As I said at the beginning, the real advantages of AI and machine learning are about time and context,” Pace concludes. “Recorded Future is able to process massive amounts of raw data, irrespective of language and format, and convert it into intelligence. From there, it can predict threats and highlight the specific vulnerabilities, exploits, or actors I should be focusing on.”

When analysts have this level of context at their fingertips instantly, their ability to make timely and effective decisions improves dramatically.

To learn more about how to best utilize machine learning in your organization, read our white paper titled “4 Ways Machine Learning Is Powering Smarter Threat Intelligence.”
