Tips for Turning Bad Indicators of Compromise Into Good Ones

Editor’s Note: The following blog post is a summary of a presentation from RFUN 2018 featuring Adrian Porcescu, EMEA professional services manager at Recorded Future.

Good or bad, indicators beget indicators.

Often, indicators are seen only as lists of IPs, domains, and so on, without an associated context. These lists can contain a high number of false positives or otherwise irrelevant data, wasting the time of analysts trying to decipher them. In order to make indicators truly useful and timely, enrichment is key.

Here, we will look more closely at how we can make better use of indicators and their associated context in order to transform them into good indicators of compromise. We’ll also see how we can make sure that we are not starting our automation or investigations with a bad indicator of compromise — something that will waste our time and likely lead to unhelpful context — as bad indicators will beget further bad indicators. In addition, we’ll look at how cyber threat intelligence provides that crucial context needed to transform raw data into true indicators of compromise.

Indicators of Compromise and TTPs

There are several reasons to look at indicators of compromise instead of focusing on a higher part of the Pyramid of Pain, such as understanding the tactics, techniques, and procedures (TTPs) of threat actors:

- The number of TTPs made available for analysis is far smaller than the number of indicators provided by threat intelligence vendors.

- The use of TTPs requires a high level of understanding of the internal organizational structure of threat actor groups, which is usually identified and defined during threat modeling efforts.

- Security operations teams are more comfortable in, and have better capabilities around, using indicators for different courses of action and automation.

- To be able to focus resources on higher levels of the Pyramid of Pain, proper automation and orchestration with relevant context need to be implemented around indicators.

We’ll start with some examples of indicators that are usually considered bad:

Google DNS Services

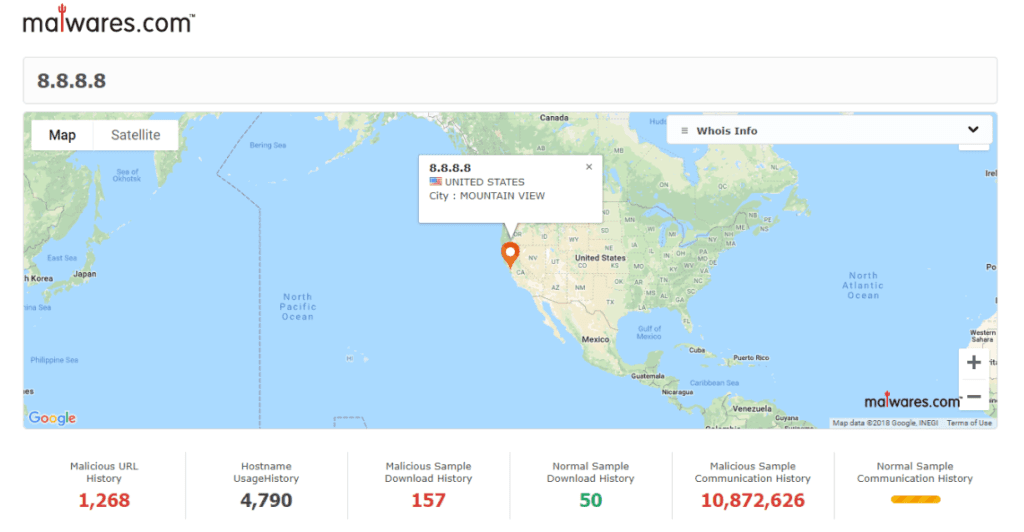

Most of the time, Google DNS IPs such as 8.8.8.8 are whitelisted by default, as they are considered bad indicators that should not be used to detect potential threats. Our analysis found the following (at the time of this analysis):

There are nearly 11 million malicious samples connecting to 8.8.8.8.

In addition, tens or even hundreds of malicious samples that make use of Google DNS services are submitted to VirusTotal every day.

Therefore, a large number of potential threats can indeed be identified by using these indicators for detection purposes. A simple lookup within the Recorded Future platform for malware related to these indicators finds several families that make use of such services. The results of the advanced search in Recorded Future enable pivoting to further related entities, like threat actors or operations, that make use of such malware.

There are also several reasons why potential threats are making use of Google DNS services:

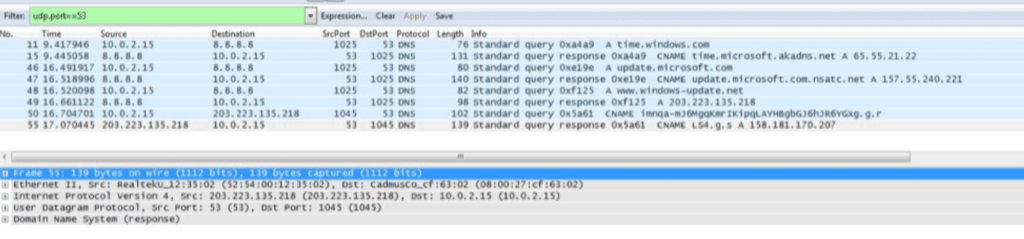

- They want a reliable DNS service that resolves malicious domains in case the internal domain resolution mechanism blocks such requests. This sometimes goes together with obfuscating the malicious domain request by hiding it among multiple requests to legitimate domains.

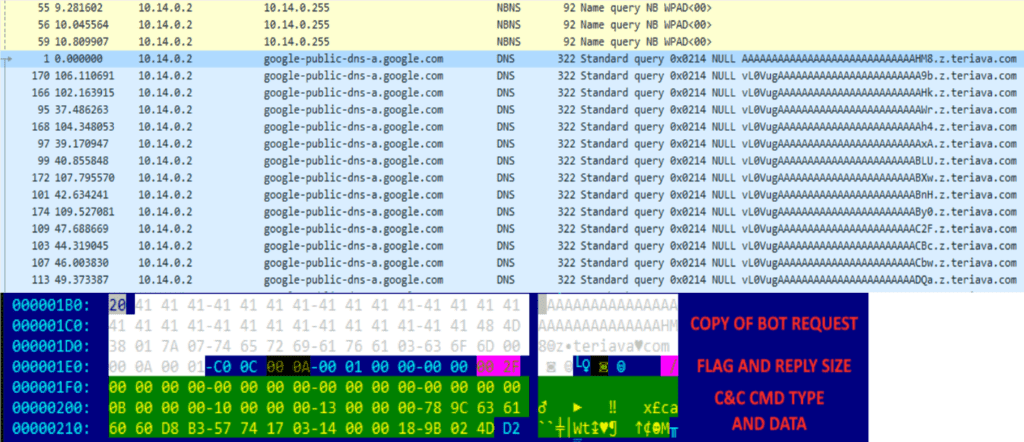

- They use DNS tunneling for communication with command and control (C2) servers, which relies on a particular behavior of DNS services: “if you don’t know, ask somebody else.” This means that requests for names unknown to Google DNS will be forwarded to the authoritative name server for the corresponding domain. In this case, the server to which the request is forwarded might respond with arbitrary strings, including C2 instructions.

An example of malicious domain resolution obfuscated between legitimate domain requests.

An example of DNS tunneling traffic.

These types of requests also suggest that connections to Google DNS services are happening in the early stages of infection by malicious samples — and being able to detect an indicator earlier on in an attack is always desirable from a defender’s point of view.

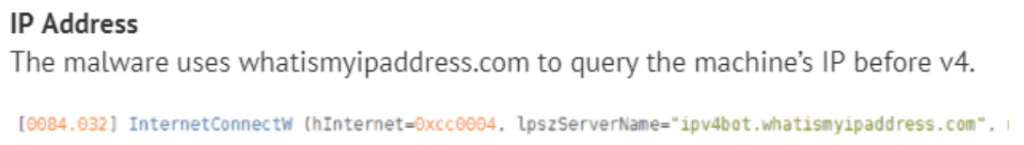

Services for Identifying Host Public IP Addresses

Analysis of three of the most used services of this type revealed several interesting facts. Many malicious samples use the following services:

- Whatismyipaddress[.]com: Around 70,000 malicious samples

- Checkip[.]dyndns[.]org: Around 60,000 malicious samples

- Whatismyip[.]com: Around 1,000 malicious samples (this service has only recently started being used, so more samples are appearing)

Several important categories and families of malware use these services to obtain the infected system’s IP address for further communication:

- Ransomware: Chimera, GandCrab, CRYPTOR

- RATs: ZeroAccess, njRAT (Bladabindi), Predator Pain

- Banking Trojans: Zeus, Dyreza, Upatre

An example of a GandCrab request to whatismyipaddress[.]com.

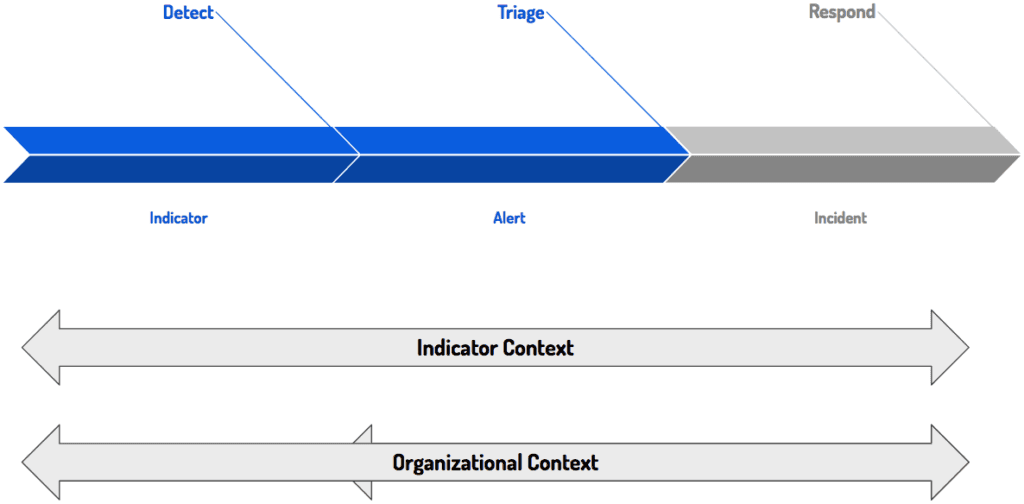

Both of these types of services can help identify a large number of malicious samples, even during early stages of infection. To use them, an organization needs a good understanding of its own internal network behavior. It can then apply detection based on indicators related to Google DNS or public IP identification services against telemetry from parts of its network that should not be using these services.

That means that one of the key aspects of turning an indicator into an indicator of compromise is the organizational context, which should establish whether a certain connection to a legitimate service is being made for relevant, business-related reasons. If not, it can be an indication of a potentially infected system.

Taking the example of 8.8.8.8, knowing that the owner of this IP is Google, we can decide whether to apply it for detection by understanding which areas of our organization do not have Google as a service provider for DNS resolution. Pivoting further, we understand that in order to apply the organizational context, we need to have proper context provided around the indicators themselves.
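To make the idea concrete, here is a minimal Python sketch of applying organizational context to these indicators. It is only an illustration, not a description of any product feature: the event format (`src_ip`, `dest_ip`, `dest_domain`), the `APPROVED_SEGMENTS` range, and the function names are all assumptions made for this example.

```python
import ipaddress

# Legitimate services discussed above that malicious samples also use.
WATCHED_IPS = {"8.8.8.8"}
WATCHED_DOMAINS = {
    "whatismyipaddress.com",
    "checkip.dyndns.org",
    "whatismyip.com",
}

# Assumption: the only segment with a business reason to use these services.
APPROVED_SEGMENTS = [ipaddress.ip_network("10.20.0.0/16")]


def is_approved(src_ip):
    """Return True if the source address sits in an approved segment."""
    addr = ipaddress.ip_address(src_ip)
    return any(addr in net for net in APPROVED_SEGMENTS)


def flag_events(events):
    """Return events that reach a watched service from an unapproved segment."""
    flagged = []
    for event in events:
        touches_watched = (
            event.get("dest_ip") in WATCHED_IPS
            or event.get("dest_domain") in WATCHED_DOMAINS
        )
        if touches_watched and not is_approved(event["src_ip"]):
            flagged.append(event)
    return flagged
```

The same connection to 8.8.8.8 is ignored when it comes from the approved segment and flagged when it comes from anywhere else, which is exactly the difference organizational context makes.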

A Bird’s Eye View of Indicators

If we take a step back and focus on what an indicator is, we can determine that it has two main components: data and context. The essence of an indicator is the data — something like an IP address or a domain. But that’s just data. It needs context from both inside your network and external sources to be a useful indicator of compromise:

- Identification Date or Activity Time: Knowing when an indicator was discovered (and if it is still active) enables the identification of relevant internal telemetry to correlate against it.

- Source: Knowing where the intelligence comes from enables the identification of any perspective biases or blind spots that the organization might have.

- Owner: To determine whether an indicator follows a normal pattern of organizational behavior or stands outside of it, you must know who owns that activity so that you can communicate with them.

- Associated Threats or Tools: Knowing what threat actors or malware are related to individual indicators can help you prioritize detected events.

- Associated Attack Phases or Vectors: Like the previous point, knowing what attack phases or vectors (if any) are associated with certain indicators enables better detection and prioritization of threats.
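One way to picture the split between data and context is as a simple record. The sketch below is our own illustration in Python; the class and field names are assumptions chosen to mirror the list above, not a schema from any particular platform.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta, timezone
from typing import List, Optional


@dataclass
class Indicator:
    # Data: the raw observable.
    value: str
    kind: str  # for example "ip" or "domain"
    # Context: everything that turns the data into something usable.
    last_seen: Optional[datetime] = None
    source: Optional[str] = None
    owner: Optional[str] = None
    associated_threats: List[str] = field(default_factory=list)
    attack_phases: List[str] = field(default_factory=list)

    def missing_context(self) -> List[str]:
        """List the context fields that are still empty."""
        names = ["last_seen", "source", "owner",
                 "associated_threats", "attack_phases"]
        return [name for name in names if not getattr(self, name)]

    def is_recent(self, now: datetime, max_age: timedelta) -> bool:
        """True if activity was seen within max_age before now."""
        return self.last_seen is not None and now - self.last_seen <= max_age
```

An `Indicator` built from a bare list entry, such as `Indicator("8.8.8.8", "ip")`, reports every context field as missing, which is a quick way to spot indicators that are not yet ready to drive detection.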

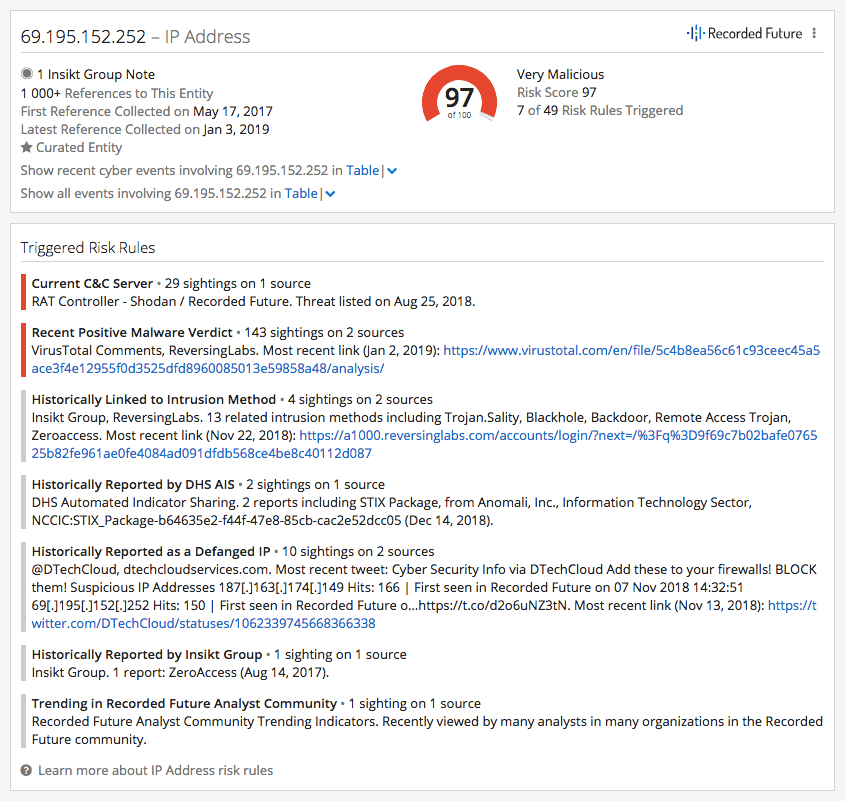

Gaining Context With Recorded Future

By using Intelligence Cards™ in the Recorded Future platform, you can view the information shown below to quickly find context on any indicator.

Example of a Recorded Future Intelligence Card™ and the indicator’s associated context.

As “context is king,” we recommend not only using indicator context in all phases of the cybersecurity lifecycle, but also incorporating internal network data as early as possible to develop context unique to your organization, which can more readily transform a bad indicator of compromise into a good one.

Quick Takeaways for Enriching Indicators

Context is critical and should be provided by threat intelligence vendors or requested by the consumers for every indicator. Here’s a list of recommendations to follow:

- Involve as many properties of the indicator as early on as possible in the detection and creation of correlation rules. Prioritize by risk and criticality, focus on the specific context or organizational context, and consider time.

- An indicator should never be used for detection purposes until it has been matured through an organizational vetting process.

- The best indicators of compromise always come from internal investigations, so make sure you are generating your own threat intelligence and already-contextualized indicators of compromise.

- Monitor for false positives and retire the indicators that generated them.
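The last recommendation, together with prioritizing by risk, can be sketched in a few lines of Python. Everything here is an assumption made for illustration: the `IndicatorStats` record, the 0 to 100 risk scale, and the thresholds are placeholders that each organization would tune for itself.

```python
from dataclasses import dataclass


@dataclass
class IndicatorStats:
    value: str
    risk: int  # assumed 0-100 risk score
    true_positives: int = 0
    false_positives: int = 0

    def false_positive_rate(self) -> float:
        total = self.true_positives + self.false_positives
        return self.false_positives / total if total else 0.0


def triage(stats, max_fp_rate=0.5, min_hits=10):
    """Split indicators into (keep, retire); keep is sorted by risk, highest first."""
    keep, retire = [], []
    for s in stats:
        hits = s.true_positives + s.false_positives
        # Only retire once there is enough evidence to judge the indicator.
        if hits >= min_hits and s.false_positive_rate() > max_fp_rate:
            retire.append(s)
        else:
            keep.append(s)
    keep.sort(key=lambda s: s.risk, reverse=True)
    return keep, retire
```

The `min_hits` guard is a deliberate choice: an indicator with only a couple of alerts has not been observed long enough to be retired on false positives alone.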

To learn more about how threat intelligence can help enrich indicators and ultimately save analysts valuable time, request a complimentary demo today.
